- ☐ Add visuals of the Mangrove3D point cloud and LiDAR virtual spheres

Through the Perspective of LiDAR: A Feature-Enriched and Uncertainty-Aware Annotation Pipeline for Terrestrial Point Cloud Segmentation

Overview

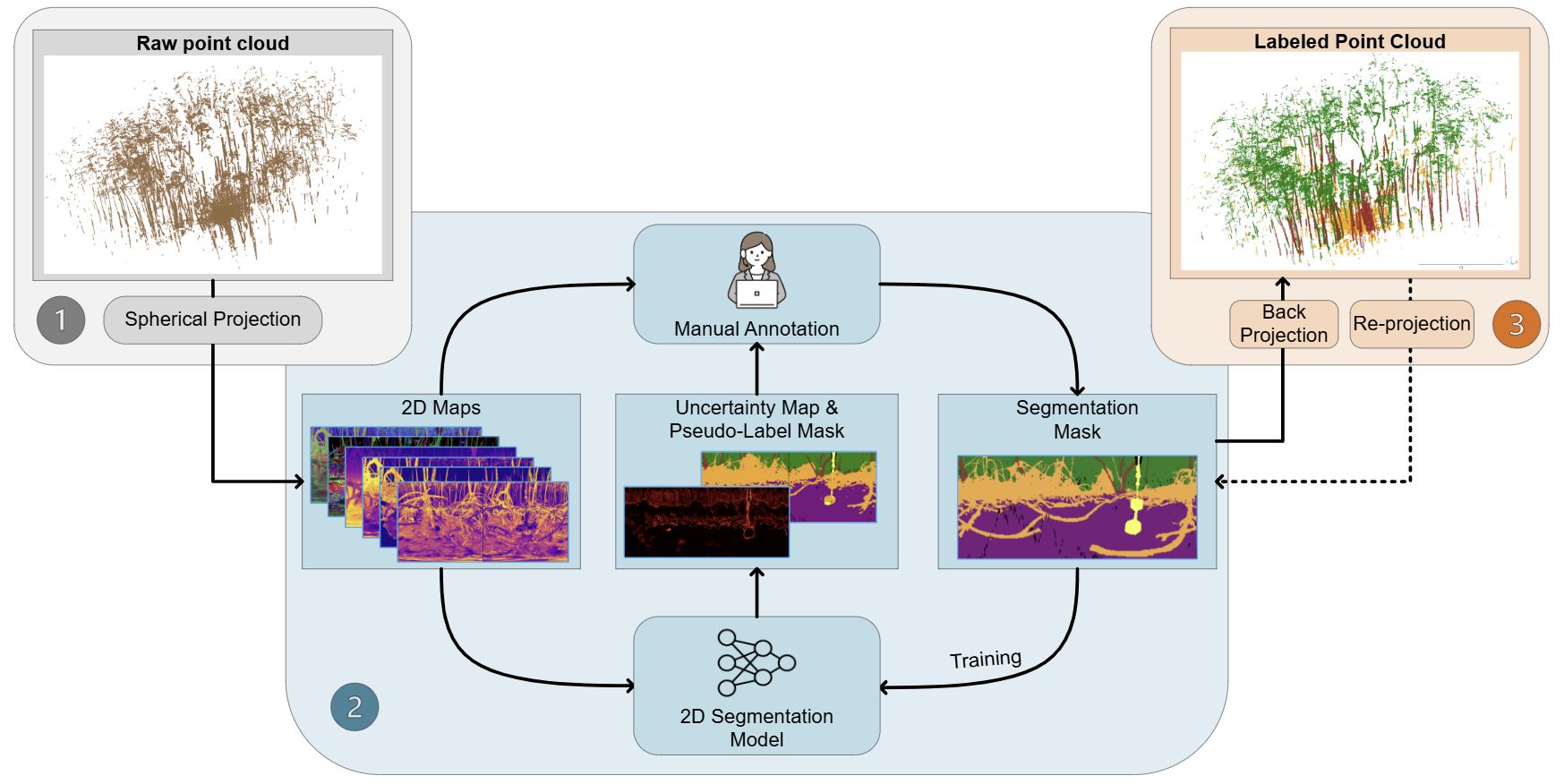

Semantic segmentation of terrestrial LiDAR data is often limited by the cost of manual labeling. We address this by projecting 3D scans into a 2D spherical space, where models can learn efficiently and their uncertainty guides the annotation process. The result is high-quality segmentation with substantially less human effort.
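To make the projection idea concrete, here is a minimal sketch of mapping scanner-centred points onto a 2D spherical (equirectangular) range image. It is an illustration under stated assumptions, not the pipeline's actual code: the function name `spherical_project`, the image size, the full-sphere field of view, and the nearest-return rule are all hypothetical choices.

```python
import numpy as np

def spherical_project(points, height=64, width=128):
    """Project scanner-centred XYZ points onto an equirectangular range image.

    Assumed conventions (illustrative only): azimuth spans the columns,
    elevation spans the rows, and each pixel keeps its nearest return.
    Returns (range_image, rows, cols); rows/cols hold each point's pixel.
    """
    points = np.asarray(points, dtype=float)
    x, y, z = points[:, 0], points[:, 1], points[:, 2]

    rng = np.linalg.norm(points, axis=1)            # distance to scanner
    azimuth = np.arctan2(y, x)                      # in [-pi, pi]
    safe_rng = np.maximum(rng, 1e-12)               # avoid division by zero
    elevation = np.arcsin(np.clip(z / safe_rng, -1.0, 1.0))  # in [-pi/2, pi/2]

    # Scale angles to pixel coordinates and clamp to the image bounds.
    cols = np.floor((azimuth + np.pi) / (2 * np.pi) * width).astype(int)
    rows = np.floor((np.pi / 2 - elevation) / np.pi * height).astype(int)
    cols = np.clip(cols, 0, width - 1)
    rows = np.clip(rows, 0, height - 1)

    # Empty pixels stay at +inf; occupied pixels keep the smallest range.
    range_image = np.full((height, width), np.inf)
    np.minimum.at(range_image, (rows, cols), rng)
    return range_image, rows, cols
```

Keeping `rows` and `cols` alongside the image is what later allows per-pixel results to be mapped back to individual 3D points.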

Approach

3D → 2D projection → uncertainty-guided annotation → back to 3D
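The last two stages of this flow can be sketched as follows. This page does not state which uncertainty measure is used, so the example assumes per-pixel Shannon entropy of a model's class probabilities. All function names are hypothetical, and the sketch assumes each point's pixel indices (`rows`, `cols`) were recorded when the scan was projected.

```python
import numpy as np

def predictive_entropy(probs, eps=1e-12):
    """Per-pixel Shannon entropy of class probabilities: (H, W, C) -> (H, W)."""
    probs = np.asarray(probs, dtype=float)
    return -np.sum(probs * np.log(probs + eps), axis=-1)

def most_uncertain_pixels(entropy, k):
    """Return (row, col) pairs of the k highest-entropy pixels, most uncertain first."""
    flat = np.argsort(entropy, axis=None)[::-1][:k]
    return np.column_stack(np.unravel_index(flat, entropy.shape))

def lift_labels_to_points(label_image, rows, cols):
    """Back to 3D: give each point the label of the pixel it projected to."""
    return np.asarray(label_image)[rows, cols]
```

An annotator would then review the pixels returned by `most_uncertain_pixels` first, and `lift_labels_to_points` would carry the corrected 2D labels back onto the point cloud.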

BibTeX

@article{ZHANG2026141,
  title = {Through the perspective of LiDAR: A feature-enriched and uncertainty-aware annotation pipeline for terrestrial point cloud segmentation},
  journal = {ISPRS Journal of Photogrammetry and Remote Sensing},
  volume = {236},
  pages = {141-161},
  year = {2026},
  issn = {0924-2716},
  doi = {https://doi.org/10.1016/j.isprsjprs.2026.03.033},
  url = {https://www.sciencedirect.com/science/article/pii/S0924271626001474},
  author = {Fei Zhang and Rob Chancia and Josie Clapp and Amirhossein Hassanzadeh and Dimah Dera and Richard MacKenzie and Jan {van Aardt}}
}

This page may be updated occasionally as the project progresses. Last updated: March 27, 2026